
Diploma Project: Robust Feature Point Extraction and Tracking for Augmented Reality

On Tuesday 20th September, Bruno Palacios (an Erasmus student from UPC-Barcelona) presented his diploma project, entitled Robust Feature Point Extraction and Tracking for Augmented Reality and supervised by David Marimon and Prof. T. Ebrahimi. This work enhances the existing AR system with more robust tracking techniques.

Abstract of the report________

Augmented reality applications require accurate estimation of the camera pose. An existing video-based markerless tracking system, developed at the ITS, has several weaknesses in this respect. To improve the video tracker, this project compares several feature point extraction and tracking techniques. The techniques that perform best in the augmented reality framework are applied to the existing system to demonstrate the improvement.

A new implementation of feature point extraction, based on the Harris detector, has been chosen. It performs better than the former Lindeberg-based implementation. The tracking implementation has been modified in three ways. Track continuation capabilities have been added to the system, with satisfactory results. Moreover, the search region used for feature matching has been modified to take advantage of pose estimation feedback. Working together, these two modifications succeed in improving the system's performance. Finally, two alternatives to the photometric test, based on Gaussian derivatives and Gabor descriptors, have been implemented; the results show that they are not suitable for real-time augmented reality. Several improvements are proposed as future work.
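To give an idea of what a Harris-based extractor computes, here is a minimal sketch in Python with NumPy. It is not the implementation from the diploma project: the function names, the box-filter smoothing, the value `k = 0.04` and the greedy point selection are all assumptions made for illustration.

```python
import numpy as np


def box_filter(img, radius=1):
    """Average each pixel over a (2*radius+1)^2 window, padding with edge values."""
    size = 2 * radius + 1
    padded = np.pad(img, radius, mode="edge")
    out = np.zeros(img.shape, dtype=float)
    for dy in range(size):
        for dx in range(size):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (size * size)


def harris_response(img, k=0.04, radius=1):
    """Harris corner response R = det(M) - k * trace(M)^2 for every pixel.

    M is the locally averaged matrix of gradient products. k and radius are
    illustrative defaults, not values taken from the project.
    """
    img = np.asarray(img, dtype=float)
    iy, ix = np.gradient(img)          # derivatives along rows (y) and columns (x)
    sxx = box_filter(ix * ix, radius)
    syy = box_filter(iy * iy, radius)
    sxy = box_filter(ix * iy, radius)
    det = sxx * syy - sxy * sxy
    trace = sxx + syy
    return det - k * trace * trace


def strongest_points(response, count=4, min_distance=3):
    """Greedily pick the strongest positive responses, kept min_distance apart."""
    order = np.argsort(response, axis=None)[::-1]
    picked = []
    for flat in order:
        if response.flat[flat] <= 0:
            break                      # only positive responses are corner-like
        y, x = divmod(int(flat), response.shape[1])
        if all(max(abs(y - py), abs(x - px)) >= min_distance for py, px in picked):
            picked.append((y, x))
            if len(picked) == count:
                break
    return picked


if __name__ == "__main__":
    # Synthetic test image: a bright square on a dark background.
    image = np.zeros((20, 20))
    image[5:15, 5:15] = 1.0
    print(strongest_points(harris_response(image)))
```

In this sketch the response is positive near corners, negative along straight edges and zero in flat regions, which is why only positive values are kept as feature point candidates.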

Results_____________

Scene augmented with a virtual red square in front of a real monitor.

Posted by david.marimon at 17:38

XjARToolkit - How should it work (end user example)


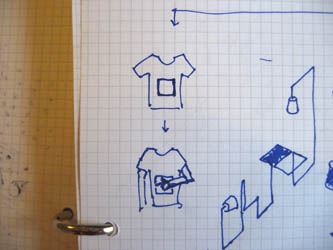

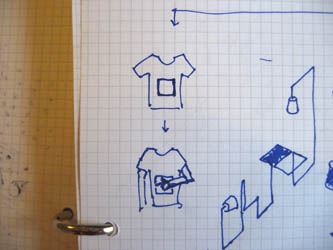

Step 1: any user can create content (mostly small pictures, video, text or sound files made with a cellphone or a computer).

Step 2-3: the user sends or uploads the file to a networked database and, at the same time, associates it with a visual sign (through a picture of the sign or through its code).

Step 3: the signs themselves can have different statuses: they can be used alone or combined into a pattern.

Step 4: any user can access any of the AR content by observing the signs through a camera. Access would ideally be possible through cellphones, PDAs, laptops, portable game stations, origami-like computers, etc. (today it is only possible through laptops, which doesn't make much sense).
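The steps above can be sketched as a small data structure. This is only an illustration in Python, not part of XjARToolkit: the class names `Content` and `SignRegistry`, their fields, and the idea of keying content on a set of sign codes (so that a single sign and a pattern of signs are handled the same way) are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Content:
    """A piece of user-created content (step 1). Fields are hypothetical."""
    author: str
    media_type: str   # e.g. "picture", "video", "text", "sound"
    uri: str


@dataclass
class SignRegistry:
    """Stands in for the networked database of steps 2-3."""
    _by_signs: dict = field(default_factory=dict)

    def upload(self, sign_codes, content):
        # A single sign is a set with one code; a pattern is a set of several.
        key = frozenset(sign_codes)
        self._by_signs.setdefault(key, []).append(content)

    def lookup(self, visible_codes):
        """Step 4: return the content of every sign or pattern fully in view."""
        visible = set(visible_codes)
        return [
            item
            for key, items in self._by_signs.items()
            if key <= visible          # all signs of the key are visible
            for item in items
        ]


if __name__ == "__main__":
    registry = SignRegistry()
    registry.upload(["A"], Content("anna", "picture", "photo.jpg"))
    registry.upload(["A", "B"], Content("ben", "text", "note.txt"))
    print(registry.lookup(["A"]))        # content attached to sign A alone
    print(registry.lookup(["A", "B"]))   # also the content attached to the A+B pattern
```

Keying on a set of codes is one possible way to let a group of signs carry content that none of them carries alone; a real system would also need to decide how overlapping patterns are prioritized.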

We must also stress that the visual signs for the camera can be used alone, in groups (to create new and bigger signs) or as patterns. This can lead to simple visual products that surround the AR application and are "AR-Ready", bringing all the network, media and interaction possibilities to traditional static supports.

These products could be paper, post-its, wallpaper, stamps, books, t-shirts or fabrics for clothes, badges, stickers, etc.

Posted by patrick keller at 16:16

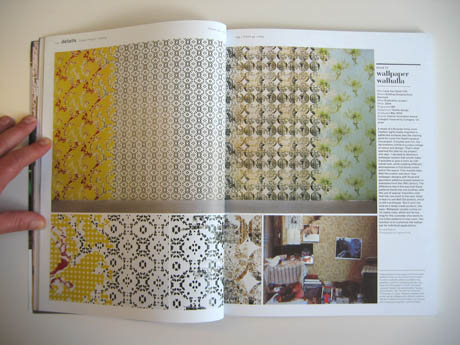

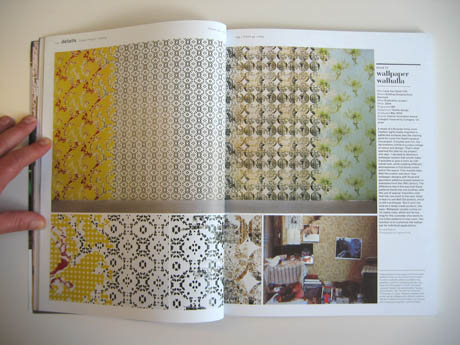

FRAME Magazine, May/June 2005.

Two diploma works published in issue 44 (May/June) of FRAME. The first, by Clay McLaurin from Rhode Island School of Design, deals with fabrics and a mix of (old and decorative) patterns. The second is a set of wallpapers by Lene Toni Kjeld from Kolding Designschool.

Both interest me for the mix they are made of, and the wallpapers in particular for their potential to create several sub-spaces and periods of time within a single space, without really building anything.


Posted by patrick keller at 11:59

Conditioning, Actar Edition, Barcelona 2005

Conditioning, the design of new atmospheres, effects and experiences: an interesting book on contemporary spatial approaches in the age of the artificial.


Posted by patrick keller at 11:46

Urban Screens 05

A festival addressing the presence of screens (interactive or not) in urban space. The site is accompanied by a blog on the same theme.

Festival's website & blog HERE or THERE.


Posted by patrick keller at 15:43

we-make-money-not-art

The blog "we-make-money-not-art" lists many interesting projects and research efforts, a large majority of which integrate recent or upcoming technologies.

One section gathers "augmented reality" projects, worth browsing in the context of our project.

The blog also has other categories, including Design and Architecture, which are likewise full of rather interesting projects.


Posted by patrick keller at 11:24

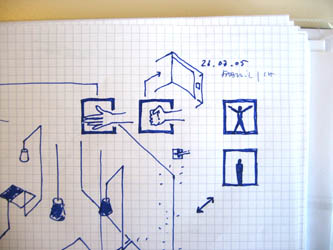

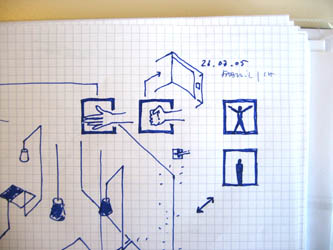

Demonstrator for new AR software features

Somewhat disconnected from the overall project, but this could serve as an example of a simple installation during the first year, to demonstrate and test various features of the AR software redeveloped by EPFL (filters) and fabric (VRML extensions and tangible interfaces).

Sentences describing actions are paired with the actions themselves in real or augmented/hybrid space.

As a reminder of the objectives for the software itself, we are aiming for: better display of 3D objects (more supported VRML nodes, handling of 3D events and camera events); extending the software into a trigger for actions in physical space and for *event tracking*; and adding simple tracking capabilities.


Posted by patrick keller at 10:23

ARToolkit + openVRML Software Redesign = XjARToolkit

Within this project and our collaboration with EPFL's Signal Processing Institute, we are working with an open source Augmented Reality software named ARToolkit, combined with openVRML. The STI laboratory is developing a signal processing project (camera vision) within the frame of this open source AR project, both to improve its performance and to give a context to their vision development.

We intend the software to be used, within this project, mainly on mobile devices (cellphones, portable game stations, PDAs, tiny laptops, origami-like computers, etc.), even if this is not possible today.

As interaction designers, we have some reservations about several aspects of the design of this combined open source software (rendering and behaviors):

__it is based on an outdated open source VRML 3D engine (openVRML, no longer updated), which dramatically limits how you can interact with the 3D content and how it is rendered.

__you can only play with static information (static 3D in this case), and you are either too far away from it on a small screen (the 3D object is too small, with no details) or too close (you only see a fragment of the object).

__the marker (AR sign) must always be fully in view.

__it has no interaction capacity and is clearly limited to visualization. It therefore doesn't exploit the potential of interaction with the *camera signs* (the software knows where the camera is when it looks at a "camera sign") or even with the added 3D.


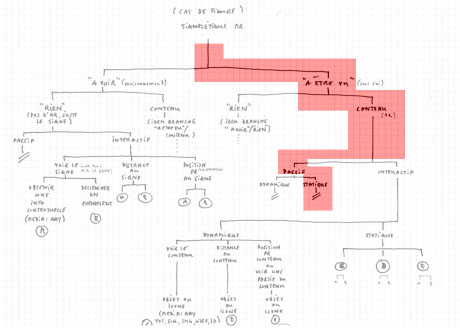

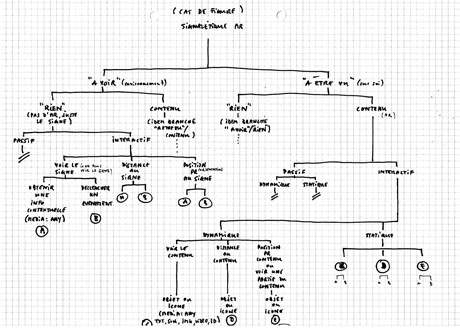

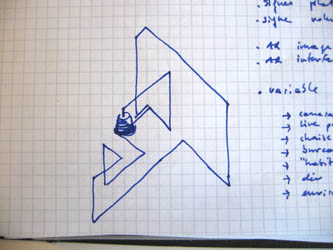

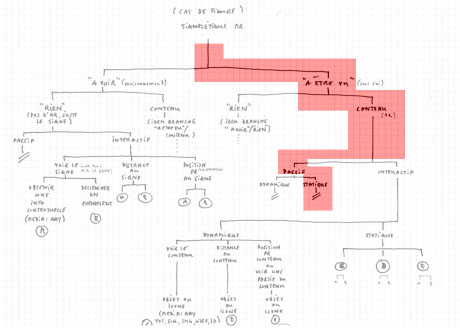

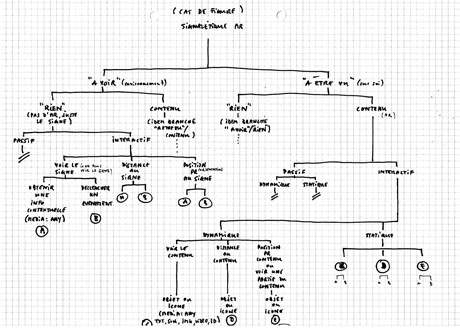

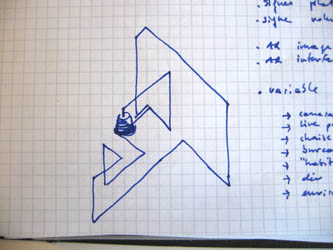

Sketch of ARToolkit & openVRML's interaction tree (red part) on top of XjARToolkit's planned interaction tree (see below):

We propose to add some features and to provide a redesigned version of this combined software, which will be labeled XjARToolkit (see the scenario to redesign the open software):

__We will work with Xj3D as the 3D engine (open source as well). It is built around a more current open source real-time 3D format: this engine covers VRML as well as X3D and already implements many more functionalities than openVRML. This will open up the capabilities for 3D interaction. Xj3D is also still in development and aims to offer a player for cellphones in the future.

Xj3D is Java based.

__As Xj3D is Java based, we will connect it to jARToolkit, which is a Java binding of ARToolkit.

__We will integrate the work of David Marimon (EPFL, Signal Processing Institute) to make the 3D integration more stable and the vision tracking better. This will enable interaction based on the position and orientation of the camera relative to the tracker/sign.

__We will extend the toolkit with another open source software (Rhizoreality) to connect it to the potential of the network (dynamic content, multi-user, etc.) and to open up interaction possibilities with other devices or objects (televisions, lights, remote controls, etc.).
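As an illustration of interaction based on the camera's position relative to a sign, here is a minimal Python sketch. It assumes the tracker reports the sign's pose as a rotation matrix `R` and a translation vector `t` that map sign coordinates into camera coordinates (x_cam = R x_sign + t); the function names and the `near` threshold are hypothetical and not part of ARToolkit, jARToolkit or Xj3D.

```python
import numpy as np


def camera_in_sign_frame(rotation, translation):
    """Camera centre expressed in the sign's coordinate frame.

    Assumes x_cam = R @ x_sign + t, so the camera centre (x_cam = 0)
    sits at -R^T @ t in sign coordinates.
    """
    rotation = np.asarray(rotation, dtype=float)
    translation = np.asarray(translation, dtype=float)
    return -rotation.T @ translation


def camera_distance(rotation, translation):
    """Distance between the camera and the sign's origin."""
    return float(np.linalg.norm(camera_in_sign_frame(rotation, translation)))


def interaction_zone(rotation, translation, near=0.5):
    """Hypothetical behavior trigger: 'near' or 'far', by camera distance."""
    return "near" if camera_distance(rotation, translation) < near else "far"


if __name__ == "__main__":
    # Sign seen 2 units in front of the camera, rotated 90 degrees about its y axis.
    R = np.array([[0.0, 0.0, 1.0],
                  [0.0, 1.0, 0.0],
                  [-1.0, 0.0, 0.0]])
    t = np.array([0.0, 0.0, 2.0])
    print(camera_in_sign_frame(R, t), interaction_zone(R, t, near=3.0))
```

A behavior attached to a sign could then change with the zone the camera is in, or with the side of the sign the camera is looking from, without any 3D content being displayed at all.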

Note that we don't target a specific AR application but rather an array of applications, some of which are new ways of using such software (no AR in fact, just interaction with cameras and their positions relative to AR signs, opening up the functions toward a kind of spatial interface software). In this sense, the new extended toolkit should make it possible to produce plenty of different contents and behaviors in a playful way. In some cases, the content would be created by any end user (as in an SMS or MMS application), while in others it could lead to professional, task-specific applications.

XjARToolkit should become a framework for that.


Sketch of XjARToolkit's (as a Rhizoreality.mu client) extended interaction & behaviors tree:

Note that this "Interaction tree" should have in the end a relation with the "Tree of AR signs".

Posted by patrick keller at 9:35

Summer sketches & possible scenarios for October 05 - June 06


Tatiana, Adrien and Bram (the Ecal assistants on the project) had suggested, during the first *workshop*, working with the body and its various postures as potential signs.

As a direct extension of this idea: work simply with the hands and define, for example, the frame of the sign on a t-shirt (as a reminder, every sign recognized by the camera and the AR software, AR meaning Augmented Reality, must be enclosed in a square frame). Combining this t-shirt carrying the "frame" with signs made by the hands in front of a camera could lead to the first t-shirt that serves as a remote control...

This could be pursued in parallel with the development of a "pins" project, based on the graphic language developed by Tatiana, which would likewise make it possible to customize clothing (*souvenirs from home* garments for long trips...).


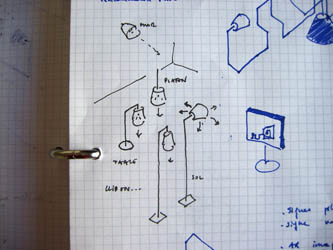

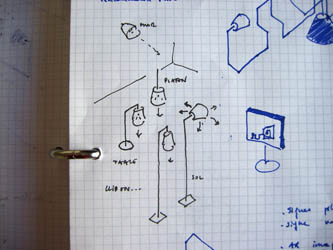

Develop the camera object(s) toward pieces of space or micro-spatialities. Reduce matter in favor of stimulating a perception. A sign or icon of space that can combine several functions (illumination, spatiality, etc.).


Develop a line of *lampshade cameras*? A direct allusion to all the types of lighting systems found in interior environments (hanging, floor-standing, wall-mounted, table, bedside, etc.).


Combine these different approaches (wallpaper, graphic language, camera-spatial-luminous objects, domestic cameras, 2D-3D objects, ...) to form micro-spatialities (here, this probably evokes a *living room* situation a little too strongly).


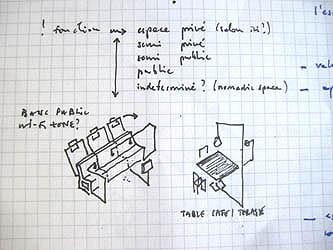

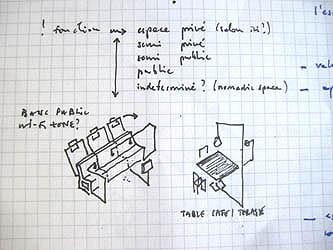


Ask the following question (raised by Alexis Georgacopoulos at our last meeting): what is the general purpose of the space we are working on? Initially, we wanted to work on public urban space, outdoors or sheltered (wi-fi zone type). The *Chambre d'hôtel* workshop brought us back indoors, for now into a domestic type of space (and therefore no longer really public).

Posted by patrick keller at 16:46