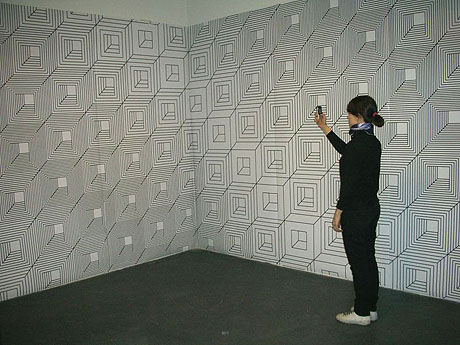

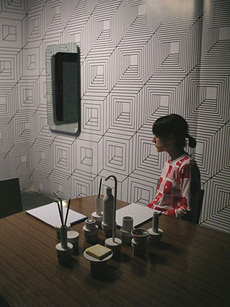

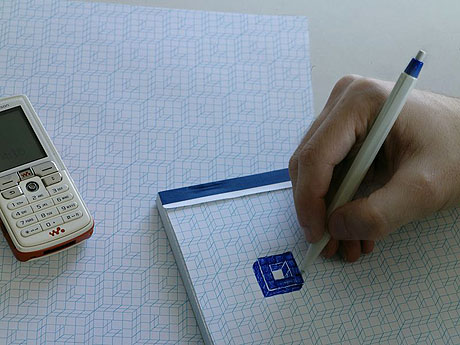

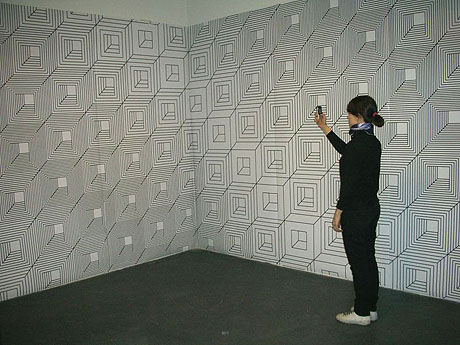

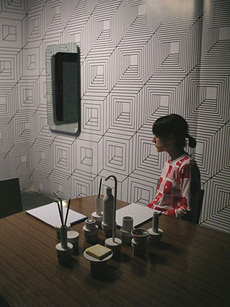

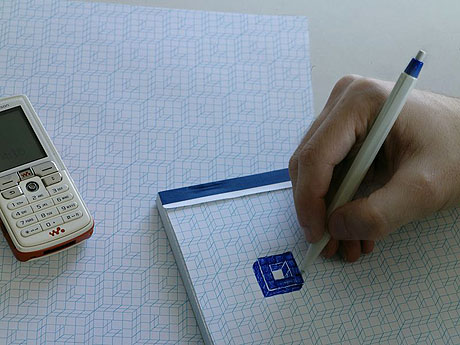

Variable_environment: prototypes photo shoot process

-

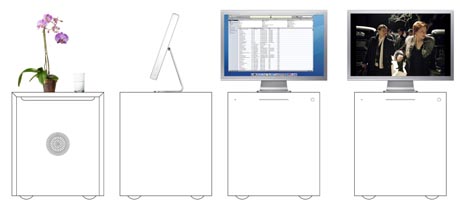

We have produced a set of prototypes for the artifacts we have been working on for some time now: things like the "AR-ready patterns & objects", the "Webcamera - mirrors (& light)", the "Rolling micro-functions (for Sohos)", etc. These objects can use or interact with software such as XjARToolkit or VTSC (and others, such as Skype).

-

We are now in the process of shooting pictures of them. The overall set of pictures will describe a sort of (slightly visually) annoying space, made of artifacts that look traditional at first sight (wallpapers, mirrors, table, etc.) but that have a discreet second or third function. It is a marker-based room whose functions can potentially evolve over time, be (inter-)/re/active and/or endlessly customizable, and shift from a private atmosphere to a more public one. It is also a space where most of the content remains invisible to the human eye but can be seen through a (cellphone, handheld, fixed) camera. It could be "one room somewhere (including a film set) where somebody lives and works".

-

Here are some "making of" pictures of the photo shoot to give a first impression. We expect to finalize the variable_environment research project (phase 1) by the end of the month. Hopefully...

-

Posted by patrick keller at 11:53

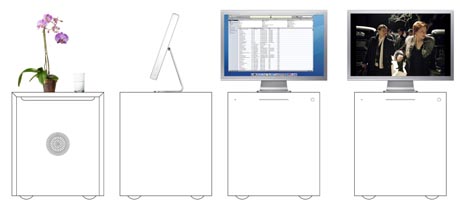

Webcam mirror uses

Following Aude's post, I would like to underline the fact that any webcam application could potentially be used in conjunction with these objects: Skype, for instance, or posting the image "memory" of the mirror on the internet (e.g. blog, Flickr), or running variable_environment's own software such as tracking of spatial configurations, augmented reality, etc.

It still has to be decided whether a webcam mirror can do everything (possibly supporting "any" program, like "any webcam") or not (the mirror has a specific function). The additional computed visual function of the lamp will possibly be used for tracking only (a remote-control kind of function). In any case, if a computer is needed, it will be the "computer bedside/low table".

Posted by patrick keller at 17:27

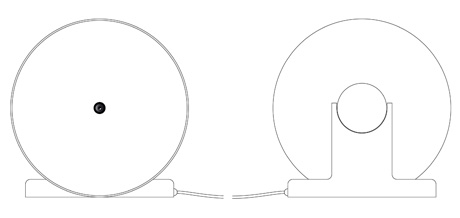

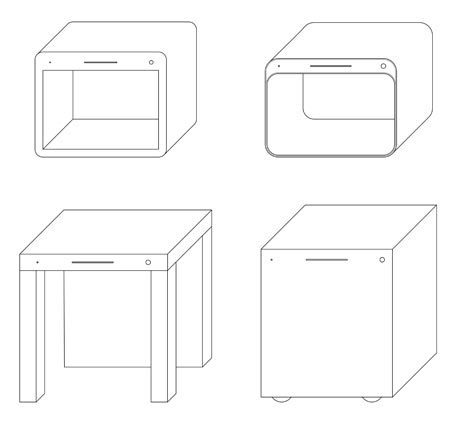

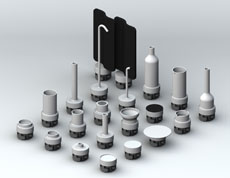

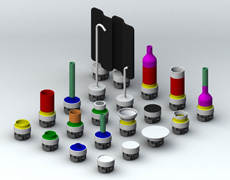

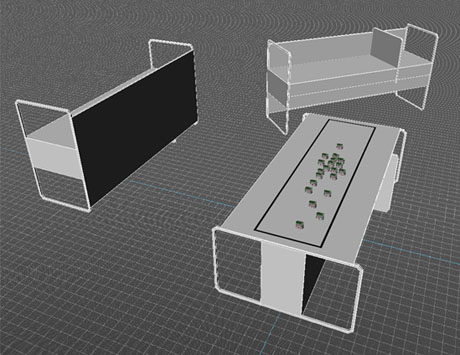

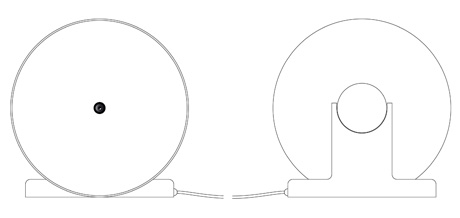

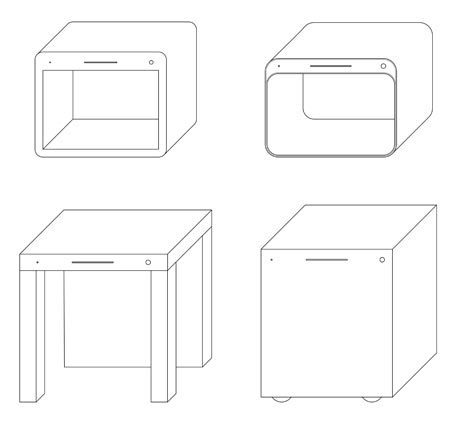

Webcam Objects (models)

Webcam objects, as they are designed for this project, have a strong, simple and slightly unusual identity.

The following images are taken from the work in progress, before the production of full-scale objects in their final materials.

-

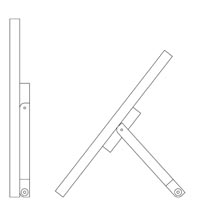

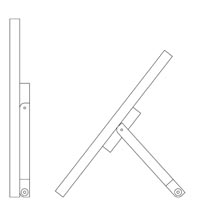

Model of the webcam lamp (still made out of cardboard in this picture) fixed to the ceiling.

The cylinder is 40 cm in diameter and 40 cm high.

It integrates 5 halogen lights and a webcam (central hole).

-

The Collapsible Table Mirror works with a laptop.

-

Furniture Computer (computer "bedside/low" table)

Posted by aude at 17:08

Smart mobs & camera

From the *Smart mobs* blog, a few lines on surveillance cameras in public space:

This USA Today article says that in "U.K. public places, smarter closed-circuit TV cameras have been given the ability to listen for disturbances and also keep an eye on citizens. The system has already been put into use in the Netherlands to listen for people speaking in aggressive tones, to try to counter violent attacks in Dutch streets, prisons and railways.

The aggression detector has been fitted to CCTV cameras on the streets of Groningen and Rotterdam in the Netherlands. In the U.K., London police also are considering installing the system, said Derek van der Vorst, the director of Sound Intelligence, the company that created the technology. The system works by putting microphones in CCTV cameras to continually analyze the sound in the surrounding area. If aggressive tones are picked up, an alarm signal is automatically sent to the police, who can zoom in the camera to the location of the suspect sound and investigate the situation".

-

'Big Brother' cameras listen for fights

Posted by patrick keller at 9:48

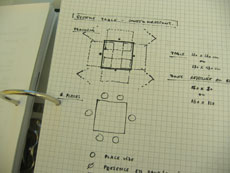

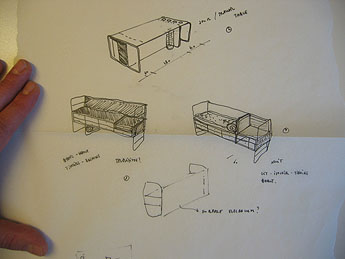

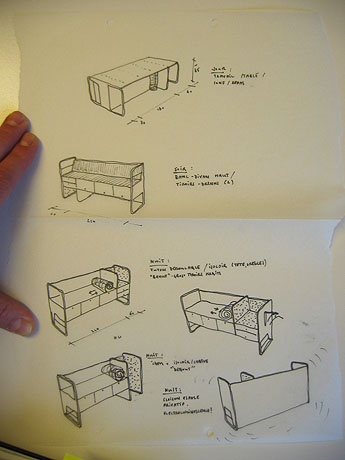

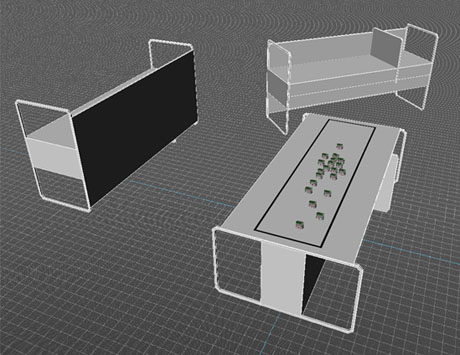

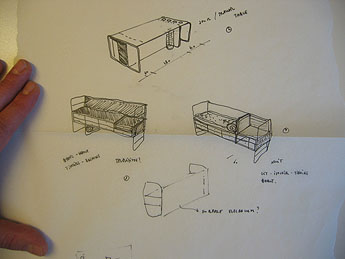

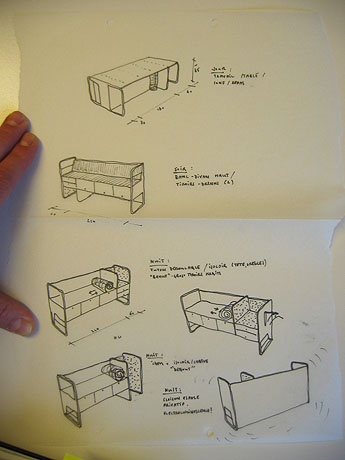

Multi-functions / hybrid table sketches

At one point during the Workshop#4 process, we needed a long table to run some live tests. We thought about producing a table that would match our "one room to live, work, eat, entertain, etc." concept: a hybrid and multi-functional table that would extend some of the Workshop#3 results.

Following these early abstract proposals made by Aude Genton during Philippe Rahm's workshop, I quickly tried some further sketches for that "hybrid functions" table (bed, table, cooking area, settee, cupboard, drawer, reception desk, boudoir, closet, ... see below) that could be used on all of its sides.

As those early sketches were not really convincing (they looked a bit like an impractical refugee camp table), we finally decided not to go further with that project and to use a regular (and smaller) table for our tests...

-

Posted by patrick keller at 14:12

VTSC System - Testing

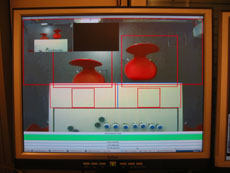

VTSC (Visual Tracking of Spatial Configurations) was successfully installed and launched on a Mac mini running Windows XP through Boot Camp. The VTSC software will be needed to perform spatial tracking for Workshop#4.

The tested configuration includes one Mac mini, 4 USB webcams from Logitech (Quickcam for Notebook Pro), a USB hub and cables of different lengths for the connected webcams (see picture below).

The latest driver from Logitech (version 10.X) seems to cause problems (the kernel using 100% CPU time and JMF crashing when accessing a webcam video stream). These problems can be avoided by using driver version 9.X. The moderator was also running on the same Mac mini without major frame rate loss.

The Mac mini may be the system used for the final setup, once the connection with the e-puck controller from EPFL's SWIS laboratory is made.

The Logitech Quickcam Pro also serves as the base for our (web)camera objects.

-

Posted by fabric | ch at 14:58

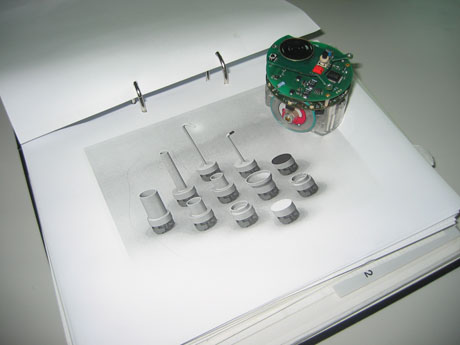

Video Tracking System of Spatial Configurations

The very first version of the "Video Tracking of Spatial Configuration (VTSC)" system developed by fabric | ch was delivered to Julien Nembrini from the EPFL. The system can monitor an unlimited set of volumes in a given space and detect whether a given volume is filled or not. A volume is obtained from a set of different USB webcam points of view (shooting the same room from distinct locations, for example): the intersections of zones defined in these points of view define the volumes.
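As a minimal sketch of that idea (not the actual VTSC code, which is Java-based), a volume can be modelled as the set of 2D zones that define it, one or more per camera view. The `Volume` class, the camera and zone names, and the rule that a volume counts as filled only when all of its zones are activated are assumptions made for illustration.

```python
from dataclasses import dataclass

# A zone is identified by (camera_id, zone_name); both names are hypothetical.
Zone = tuple[str, str]


@dataclass(frozen=True)
class Volume:
    name: str
    zones: frozenset[Zone]  # the 2D zones whose intersection defines the volume

    def is_filled(self, active_zones: set[Zone]) -> bool:
        # Assumed rule: the volume is filled only when every defining zone is activated.
        return self.zones <= active_zones


chair = Volume("chair_1", frozenset({("cam_a", "z1"), ("cam_b", "z3")}))
seen_by_both = {("cam_a", "z1"), ("cam_b", "z3"), ("cam_c", "z2")}
seen_by_one = {("cam_a", "z1")}

print(chair.is_filled(seen_by_both))  # True
print(chair.is_filled(seen_by_one))   # False
```

Requiring all zones guards against a single camera being fooled by something passing in front of it, at the cost of missing a volume when one view is occluded.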

.

The system works with a set of basic USB webcams. Each USB webcam is controlled by a dedicated application. The application manages the 2D zones that must be monitored in the image obtained from its associated webcam: it detects whether a zone is activated (filled) or not. All these applications are networked (meaning that the monitored volumes can even be in distinct remote locations), and all information is centralized in a main controller application known as the moderator. The moderator filters the received information and decides whether a volume is activated (filled) or not.
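The post does not say how a webcam application decides that a 2D zone is filled. One common approach is background differencing, sketched below under that assumption: a zone counts as filled when a large enough share of its pixels differs from a reference image of the empty scene. The function name, the rectangular zone format and both thresholds are hypothetical.

```python
def zone_is_filled(background, frame, zone, pixel_threshold=30, fill_ratio=0.2):
    """Return True when enough pixels inside the zone differ from the background.

    background, frame: 2D lists of grayscale values (0-255) of the same size.
    zone: (left, top, right, bottom) rectangle, right and bottom exclusive.
    """
    left, top, right, bottom = zone
    changed = 0
    for y in range(top, bottom):
        for x in range(left, right):
            if abs(frame[y][x] - background[y][x]) > pixel_threshold:
                changed += 1
    area = (right - left) * (bottom - top)
    return area > 0 and changed / area >= fill_ratio


# A 4x4 empty scene, then the same scene with a bright object in the top-left corner.
background = [[10] * 4 for _ in range(4)]
frame = [row[:] for row in background]
frame[0][0] = frame[0][1] = frame[1][0] = frame[1][1] = 200

print(zone_is_filled(background, frame, (0, 0, 2, 2)))  # True: 4 of 4 pixels changed
print(zone_is_filled(background, frame, (2, 2, 4, 4)))  # False: nothing changed
```

Each application would then only need to send one boolean per zone to the moderator, which fits the remark that the video stream itself is never sent over the network.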

.

Within the framework of this project, a volume activation will prompt EPFL's e-puck robots to organise themselves into a given configuration.

-

As the system is network-based, it can be deployed very conveniently. The number of USB webcams involved, the number of computers needed and the location of these computers can be adapted very easily to any kind of project/configuration. As mentioned previously, it is even possible to combine the monitoring of volumes that are not at the same location in order to control something in yet another location. It can also be easily integrated into the Rhizoreality system developed by fabric | ch.

.

The tests we have made revealed a set of limitations/observations to take into consideration when deploying a VTSC configuration. We successfully plugged 4 USB webcams into the same computer (PC laptop, desktop) by using a USB hub. Of course, the application in charge of controlling a given webcam must be installed and run on the same computer as the webcam (the video stream is not broadcast). So one basic computer was able to host 4 video image analysis applications and the moderator without any major frame rate loss.

One must keep in mind the USB limitation linked to cable length (around 10m max.). It should be possible to connect a webcam with a longer cable by using a USB repeater or a USB to RJ45 converter, but these options were not tested or used within the frame of this project.

Of course, the more powerful the host computer, the more webcams it should be possible to connect. The USB bus, however, has its own bandwidth limit, which restricts the number of cameras that can be connected to the same computer without losing the video signal or a large part of the frame rate.
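To give a rough order of magnitude for that bandwidth limit, here is a back-of-the-envelope estimate. It assumes a USB 2.0 High Speed bus (480 Mbit/s signalling rate) and uncompressed 24-bit frames; neither is stated in this post, and the resolutions and frame rates are hypothetical. Real throughput is lower than the signalling rate, and webcams that compress their stream need less, so the result is only an indicative upper bound.

```python
USB2_SIGNALLING_BITS_PER_S = 480_000_000  # assumption: USB 2.0 High Speed bus


def stream_bits_per_s(width, height, fps, bits_per_pixel=24):
    """Raw bit rate of one uncompressed video stream."""
    return width * height * bits_per_pixel * fps


def max_cameras(width, height, fps, bus_bits_per_s=USB2_SIGNALLING_BITS_PER_S):
    """Upper bound on the number of such streams that fit on one bus."""
    return bus_bits_per_s // stream_bits_per_s(width, height, fps)


print(stream_bits_per_s(320, 240, 15))  # 27648000
print(max_cameras(320, 240, 15))        # 17
print(max_cameras(640, 480, 30))        # 2
```

Under these assumptions, lowering the resolution or the frame rate of each stream is the main way to fit more cameras on one host.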

.

The system is based on Java but video signals are accessed through DirectX, so VTSC can only run under Windows. This may change over time if the module that accesses the webcam's video signal is replaced.

Posted by fabric | ch at 10:56

Example use of video tracking (VTSC)

This example is based on a multi-purpose "architectural" table of the Joyn type (design: R. & E. Bouroullec, manufacturer: Vitra), 540x180. The various dimensions of the Joyn tables will be useful for the rest of the project. The aim of this collaboration with the SWIS laboratory (within the framework of Workshop_04) is indeed not to develop tables, but essentially a "collaboration" between small robots (e-puck) and users in a context of micro-spatiality.

.

EPFL's e-puck robots could move within an area bounded by a black line laid on the table (red parallelepiped in the image). For now, the black line must be present to keep the robots' movements safe. The robots depend on a dedicated video tracking system to control their movements on the table. If that system failed, the robots could simply fall off the table by continuing a movement that the dedicated video tracking system had not cancelled. These robots are currently partially autonomous, but all research is aimed at making them fully autonomous (no longer dependent on an external video tracking system).

-

A set of cameras (USB webcams) monitors a set of volumes around the table, and also on the table: is a chair occupied, has a participant rested their elbows on the table, or just a hand, etc. Depending on which volumes are occupied, certain activities are suggested to the robots: they move and organise themselves into a group configuration in order to "serve" the participants as well as possible.

.

The volumes monitored by the webcams are "natural" in the sense that their spatial occupation results from the most ordinary human activity. The participant needs no training to understand how the whole setup works and can rely solely on intuition and their perception of the space.

-

Posted by fabric | ch at 10:08

VTSC - Tech. Review

Video tracking systems are usually set up for object motion tracking or change detection. These systems are assumed to run in real time, i.e. to analyze a live video stream and deliver the expected result immediately, without delay.

The obvious main purpose of such systems is usually video surveillance (persons, vehicles) or even object guidance (missiles).

PFTrack

Optibase

Logiware

A large set of academic (http://citeseer.ist.psu.edu/676131.html) and commercial references exists, exploiting a well-known set of distinct methods. Usually, the better the algorithm, the heavier its CPU footprint.

Commercial solutions usually perform very well but rely on dedicated hardware, which makes it possible to run high-performance algorithms in real time.

In the framework of this project, a set of pre-defined constraints must be taken into account:

-> The tracking system must interact with an existing robot control system developed at the EPFL

-> Low cost hardware may be used for cameras and computers (video streams analysis)

-> Several tracked-area activations, coming from several distinct cameras, may be combined to validate (or not) a single decision

-> The number of cameras must be maximized (in order to obtain a maximum of tracked configurations) while the number of computers needed to perform video analysis must be minimized

This set of constraints excludes any commercial solution, which may be costly and may be difficult to adapt to the scope of the described experiment.

It also disqualifies open-source or freely available video analysis systems because of their lack of functionality: none of the tested projects was able to deal with several cameras connected to the same host computer, for example.

Some of them require a particular type of camera, compatible only with specific drivers (WDM for JMyron).

By developing a highly networked system based on commonly used technology (Microsoft DirectShow), we will be able to use any Windows-compatible webcam without any particular limitation. It also means being able to access several camera video streams through USB from the same host computer.

The network layer will ensure that all video analysis data can be centralized in a dedicated application in charge of validating a given decision (e.g. "3 persons are sitting around the table": true or false?) and of making this information available to the robot's controller application (EPFL), again over the network.

The video analysis itself can be freely based on methods described in the numerous research papers found in the literature, making it possible to choose one method or another according to the CPU footprint we can allow for the application.

New video tracking methods may even be included later, making it possible to have a set of networked video tracking applications each running a different video analysis algorithm.
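The kind of exchange this implies could look like the sketch below, which is illustrative only. It assumes each video analysis application sends its zone states as one JSON line (the message format, `encode_report`, `decide_seated` and all camera, zone and chair names are hypothetical), and it leaves out the actual network transport.

```python
import json


def encode_report(camera_id, zone_states):
    """Serialise one camera application's zone states as a JSON line."""
    return json.dumps({"camera": camera_id, "zones": zone_states}) + "\n"


def decide_seated(reports, chair_zones, required=3):
    """True when at least `required` chairs have all of their zones activated."""
    active = set()
    for line in reports:
        msg = json.loads(line)
        for zone, filled in msg["zones"].items():
            if filled:
                active.add((msg["camera"], zone))
    seated = sum(1 for zones in chair_zones.values() if zones <= active)
    return seated >= required


# Each chair is watched by two cameras (hypothetical layout).
chair_zones = {
    "chair_1": {("cam_a", "c1"), ("cam_b", "c1")},
    "chair_2": {("cam_a", "c2"), ("cam_b", "c2")},
    "chair_3": {("cam_a", "c3"), ("cam_b", "c3")},
}
reports = [
    encode_report("cam_a", {"c1": True, "c2": True, "c3": True}),
    encode_report("cam_b", {"c1": True, "c2": True, "c3": False}),
]

print(decide_seated(reports, chair_zones))              # False: only 2 chairs confirmed
print(decide_seated(reports, chair_zones, required=2))  # True
```

The boolean result is what would then be forwarded to the robot controller, so the controller never has to know how many cameras or computers produced it.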

Posted by fabric | ch at 16:13